We often think of driving in binary terms — either a human is driving a car 100% of the time, or a computer is driving a car 100% of the time, i.e., autonomous vehicles. In most cases, the reality is actually more of a hybrid. As we discussed in a previous article, this makes perfect sense — an autonomous vehicle (AV) can encounter almost infinite scenarios while driving. Although the AI in the driver’s seat should ‘understand’ most situations encountered on the road, there will always be outliers that it can’t interpret. These situations require human intervention, i.e. autonomous vehicle teleoperation.

Teleoperation is the technical term for the operation of an unmanned machine, system, or robot from a distance. In AV teleoperations, remote operators sit in a control center and either fully drive a vehicle (“direct driving”), serve as a remote safety driver, or stand ready to assist when an AV needs guidance to handle a specific challenge, or when its confidence about what to do in a particular circumstance is insufficient (assistance of this kind is also known as “high-level commands”).

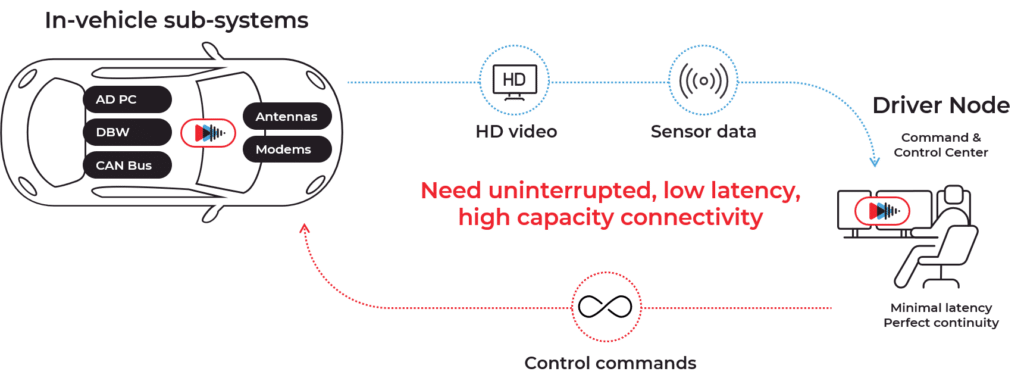

In order to determine the state of the world around the vehicle to support the decision to be made, the remote operator needs to “see” through the “eyes” of the vehicle. This means that video and other data captured by cameras and sensors on the vehicle need to travel between the vehicle and the remote operator.

Specifically, teleoperation relies on sensor data, high-quality video, and audio. The most important and sensitive data stream is video – real time, accurate, and continuous video is paramount to remote driving, and it hinges on the availability of a constant, robust connection between the moving vehicle and the remote operator at the command center.

What is required for autonomous vehicle teleoperations?

By definition, remote drivers are far removed from the vehicles they control or guide. Teleoperation requires the information captured by the vehicle sensors to be packaged, transmitted over cellular networks, and displayed at the remote operator station. All these actions take time.

The time lapse between the moment real-life events are captured by the vehicle sensors and the moment they are displayed on the remote operator’s screen is known as “glass-to-glass” latency (as opposed to network latency, which refers only to the network itself).
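To make the distinction concrete, here is a minimal sketch that adds up per-stage delays into a single glass-to-glass figure. The stage names and millisecond values are illustrative assumptions, not measurements from any real vehicle or network.

```python
from typing import Mapping

# Hypothetical per-stage delays in milliseconds (assumed, not measured).
STAGE_LATENCY_MS = {
    "camera_capture": 15,
    "encode": 10,
    "network_uplink": 40,
    "decode": 10,
    "display": 15,
}


def glass_to_glass_ms(stages: Mapping[str, float]) -> float:
    """Total delay from sensor capture to the operator's screen."""
    return sum(stages.values())


def network_share(stages: Mapping[str, float], key: str = "network_uplink") -> float:
    """Fraction of the glass-to-glass delay spent on the network alone."""
    return stages[key] / glass_to_glass_ms(stages)


total = glass_to_glass_ms(STAGE_LATENCY_MS)
print(f"glass-to-glass: {total} ms")
print(f"network share: {network_share(STAGE_LATENCY_MS):.0%}")
```

With these assumed numbers the network accounts for less than half of the total, which is why a good network latency figure alone says little about what the operator actually experiences.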

High latency can be a problem in any AV use case, whether it’s robotaxis, shuttles, delivery robots or trucking. If the latency is too high, by the time the operator responds to a given situation, it will have changed and the response will be irrelevant at best, and dangerous at worst. In order to properly respond to situations while remotely driving a vehicle at high speed, the glass-to-glass latency requirement is 100 milliseconds or less.
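A quick way to see why latency matters is to work out how far a vehicle travels before the operator even sees what the camera captured. The sketch below does that arithmetic. The speeds are arbitrary examples, and the calculation covers only the display delay, not the operator's reaction time or the return trip of the driving command.

```python
def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in meters covered at a constant speed during a given delay."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


# Example speeds are assumptions chosen for illustration.
for speed_kmh in (30, 50, 100):
    for latency_ms in (100, 300):
        meters = distance_during_latency_m(speed_kmh, latency_ms)
        print(f"{speed_kmh} km/h, {latency_ms} ms -> {meters:.2f} m")
```

At 100 km/h, for example, the vehicle covers roughly 2.8 m during a 100 ms delay, and three times that distance if the delay grows to 300 ms.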

The complete and real-time presentation of 4K video, audio, and sensor data to the remote driver, and the speed at which driving commands are returned to the vehicle, are the cornerstone of reliable teleoperation.

Can public cellular networks and standard video technologies deliver the level of latency and reliability required for teleoperation?

A few words about cellular networks and connectivity

Teleoperation of AVs relies on cellular networks to transmit information to and from the control center. However, cellular network connectivity is dynamic by nature for several reasons:

- Network design – antenna location, density and transmission technology.

- Cellular networks rely on radio waves for transmission, which can be blocked or weakened by obstructions such as buildings or even cars and trucks. Modern cellular networks are designed to overcome such barriers and provide coverage without line-of-sight in most locations, yet the fact of the matter is that signal strength fluctuates and coverage is dynamic.

- When in motion, the connection to the network is handed over from one base station to another, a handover that sometimes involves a drop in connectivity levels. By nature, a moving vehicle experiences frequent cell handovers as it enters and exits the reception areas of different base stations.

- Other factors that impact the available bandwidth capacity include distance from the tower (antenna), the number of connections in a specific cell at any given time, and overall congestion in the backhaul.

- Finally, network operators make tweaks and changes to the network on an ongoing basis, meaning capacity can change unexpectedly even in locations that are known to have good connectivity.

This image shows the upload and download speeds on a single network during a drive in Tel Aviv, which is a highly connected city. As you can see, the fluctuations are extreme.

For all the reasons described, it’s extremely hard to guarantee consistent, high-quality, and low-latency connectivity using a single network or channel. Even if the desired level of connectivity is currently available, a change can occur – a tall building, cell handover, more users – and suddenly it’s not enough. A sudden drop can cause a gap in reception, leading to a delay or even loss of data packets. That’s why it isn’t safe to depend on one modem, even if it’s 5G.
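The point about sudden drops can be illustrated with a small sketch: a link whose average uplink rate looks comfortable can still contain stretches where it falls below what the video stream needs. The throughput samples (one per second) and the required rate below are made-up values for illustration only.

```python
from typing import Sequence

# Hypothetical uplink samples in Mbps, one per second (assumed data).
UPLINK_SAMPLES_MBPS = [12.0, 9.5, 3.1, 2.4, 8.0, 15.2, 1.9, 6.3]
REQUIRED_MBPS = 4.0  # assumed video bitrate requirement


def mean_mbps(samples: Sequence[float]) -> float:
    """Average throughput over the whole drive."""
    return sum(samples) / len(samples)


def longest_shortfall(samples: Sequence[float], required: float) -> int:
    """Length, in samples, of the longest run below the required rate."""
    longest = current = 0
    for rate in samples:
        if rate < required:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


print(f"mean uplink: {mean_mbps(UPLINK_SAMPLES_MBPS):.1f} Mbps")
print(f"longest shortfall: {longest_shortfall(UPLINK_SAMPLES_MBPS, REQUIRED_MBPS)} s")
```

In this made-up trace the mean is well above the requirement, yet the link stays below it for two consecutive seconds, which is the kind of gap an average hides.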

Achieving high-quality video

High-quality, real-time video is the most critical element for safe teleoperation.

4K video is encoded to rapidly convert raw footage into a format that can be transmitted over cellular networks with minimal delay. It’s then decoded on the remote operator’s driving terminal, allowing them to operate as if they were in the vehicle.

Video encoding technologies deployed for use on cellular networks are designed to optimize mass consumer video consumption; however, for teleoperations, encoding needs to be optimized for low-latency scenarios.

An encoding bitrate of 4-10 megabits per second is typically required to transmit high-quality video with H.265 encoding, although the exact figure depends heavily on the scene. H.264 encoding requires a 30-50% higher bitrate than H.265.
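As a rough worked example of those figures, the sketch below applies the 30-50% overhead to the 4-10 Mbps H.265 range. Pairing the lowest overhead with the lowest bitrate and the highest with the highest is a simplifying assumption made here to get an outer range, not something the figures themselves dictate.

```python
from typing import Tuple

H265_RANGE_MBPS = (4.0, 10.0)   # typical H.265 range quoted above
H264_OVERHEAD = (0.30, 0.50)    # H.264 needs 30-50% more bitrate


def h264_equivalent_range(
    h265_range: Tuple[float, float],
    overhead: Tuple[float, float],
) -> Tuple[float, float]:
    """Approximate H.264 bitrate range for the same video quality."""
    low = h265_range[0] * (1 + overhead[0])
    high = h265_range[1] * (1 + overhead[1])
    return low, high


low, high = h264_equivalent_range(H265_RANGE_MBPS, H264_OVERHEAD)
print(f"H.264 equivalent: {low:.1f}-{high:.1f} Mbps")
```

Under that assumption, the same video would need roughly 5.2 to 15 Mbps of uplink with H.264.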

But reaching a constant high-speed connection is very difficult. Congestion, lack of coverage in a certain area, changes to the network, or any of the issues discussed above could cause fluctuations that are detrimental to teleoperation.

In addition, networks are designed to prioritize downloads to serve consumer data consumption – the average smartphone user wants to watch a streamed video or scroll their feed on the bus. Uploads are usually very short “stories.”

In teleoperation, however, priorities are the exact opposite. Upload speed is the critical factor, because the uploaded video is what remote operators use to understand what is happening in the field. Download capacity matters less, as it’s only used for control commands.

Video and network latency

Video decoding requires all of the data packets. Thus, packet loss or fluctuations in latency make it difficult to present the video properly.

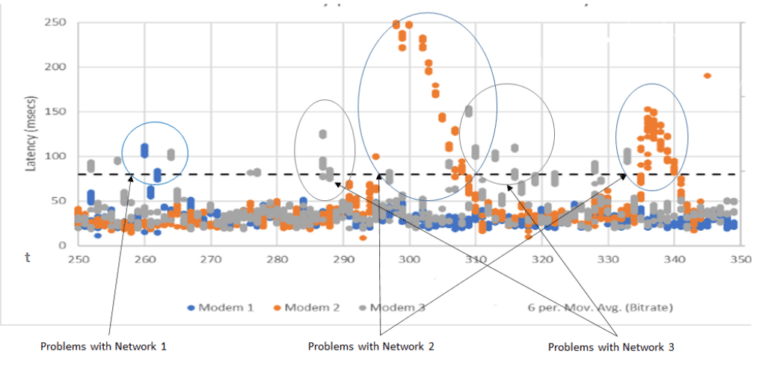

The above diagram shows latency on three different networks over a period of four seconds. The transmission pace is consistent and there is no congestion: the bandwidth is sufficient. Still, no single network delivers low enough latency all the time; each has lapses at some point. During each lapse, video can’t be displayed and teleoperation cannot occur, leaving the vehicle with no way to manage situations outside the scope of AI and machine learning. This once again demonstrates how dangerous it can be to depend on a single network for teleoperation.
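The same kind of analysis can be sketched in a few lines. The traces below are invented stand-ins for the three networks in the diagram, sampled every half second over four seconds, and the latency budget is an assumed value rather than a figure from any specification.

```python
from typing import Dict, List, Mapping, Sequence

LATENCY_BUDGET_MS = 60  # assumed network-latency budget (hypothetical)

# Hypothetical latency samples in ms, one every 0.5 s over four seconds.
TRACES_MS = {
    "network_a": [35, 40, 180, 45, 38, 42, 37, 41],
    "network_b": [50, 48, 52, 47, 210, 49, 51, 46],
    "network_c": [30, 33, 31, 29, 32, 34, 150, 160],
}


def lapses(trace_ms: Sequence[float], budget_ms: float) -> List[int]:
    """Indices of samples whose latency exceeds the budget."""
    return [i for i, ms in enumerate(trace_ms) if ms > budget_ms]


def lapse_report(
    traces: Mapping[str, Sequence[float]], budget_ms: float
) -> Dict[str, List[int]]:
    """Per-network list of sample indices that exceed the budget."""
    return {name: lapses(trace, budget_ms) for name, trace in traces.items()}


for name, indices in lapse_report(TRACES_MS, LATENCY_BUDGET_MS).items():
    times = [f"{i * 0.5:.1f}s" for i in indices]
    print(f"{name}: {len(indices)} lapse(s) at {', '.join(times)}")
```

In this invented data every network exceeds the budget at least once within the four-second window, mirroring the pattern in the diagram.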

Conclusion

High-quality, continuous and low-latency connectivity is a mission-critical element of autonomous vehicle teleoperation, which is, in turn, a key enabler of AV deployments.

No single network can provide the connectivity required for autonomous vehicle teleoperation – not even 5G.